There's a tempting new workflow making the rounds in UX and product teams. It goes something like this: use AI to generate personas at the start of a project, design your product, then use AI to evaluate it at the end. Ship. Done.

It sounds efficient. It might even feel rigorous. But there's a name for what you've actually built, and it's not a research process.

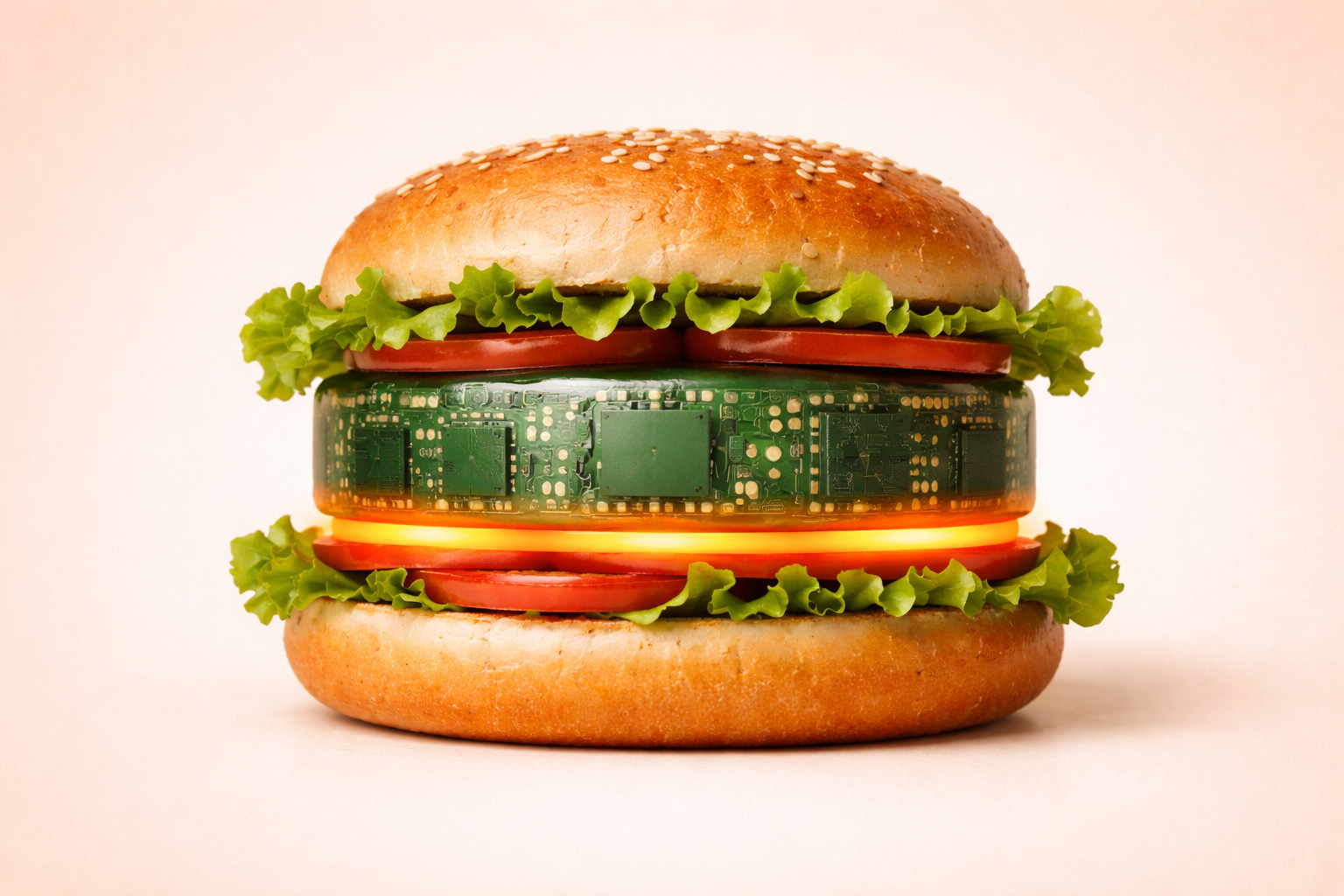

It's an AI sandwich.

(Spoiler: setting up a real user test takes about 60 seconds. The sandwich takes longer to justify.)

What's an AI Sandwich?

The AI sandwich looks like this:

- 🍞 Top slice of bread: Synthetic users, AI-generated personas, or prompting ChatGPT to "think like a customer"

- 🥗 The filling: Your actual research and design work

- 🍞 Bottom slice of bread: Asking an AI to rate the usability of your finished design

Real people? Nowhere in sight. You've replaced them, top and bottom, with AI proxies.

And what you're left with is a sandwich with no real substance.

The Top Slice: Synthetic Users Aren't Users

It starts innocently enough. You need to understand your users but don't have time for recruitment. So you ask ChatGPT to generate five personas, or you fire up a synthetic user tool that simulates how a "35-year-old project manager" might think about your product.

The output looks convincing. The personas have names, goals, frustrations, and even quotes. But here's the problem: they're a reflection of what AI has learned from the internet, not a reflection of your actual users.

Synthetic users can't tell you about the workaround your customers have been quietly using for months. They can't express the specific frustration of someone who's been burned by a confusing onboarding flow. They don't carry the lived context, habits, or emotional history that real users bring to every interaction.

You're not learning about your users. You're learning about an AI's best guess at what a user might be like.

The Bottom Slice: AI Can't Feel Confused

On the other end of the sandwich, teams are increasingly asking AI to evaluate their designs. "Here's our checkout flow, does this seem usable?" The AI obliges with structured, professional-sounding feedback.

But usability isn't a logic puzzle. It's deeply human.

AI can check whether a button label is clear in a literal sense. It cannot tell you whether a first-time user will hesitate, second-guess themselves, or quietly give up, not because the words are wrong but because something feels off. That feeling is data. And it's data that only real humans generate.

Consider something as simple as sarcasm. If a user says "Oh, great, another error message" while testing your product, a human moderator understands the frustration immediately. An AI evaluating the same transcript might flag the word "great" as positive sentiment. AI models are trained to process language statistically, not to understand the full texture of human communication.
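To make the sarcasm problem concrete, here is a minimal sketch of a deliberately simplistic keyword-based sentiment scorer. It is not how any particular AI tool works, and modern language models are far more sophisticated, but it shows the failure mode in its purest form. The word lists and the `naive_sentiment` function are hypothetical, invented only for this illustration.

```python
# Hypothetical word lists, chosen only for illustration.
POSITIVE_WORDS = {"great", "love", "easy", "nice"}
NEGATIVE_WORDS = {"confusing", "broken", "hate", "slow"}


def naive_sentiment(utterance: str) -> str:
    """Label an utterance by counting positive and negative keywords."""
    # Lowercase each word and strip surrounding punctuation.
    words = [w.strip(".,!?\"'").lower() for w in utterance.split()]
    score = sum(w in POSITIVE_WORDS for w in words) - sum(
        w in NEGATIVE_WORDS for w in words
    )
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# The sarcastic quote from a frustrated tester is scored as praise.
print(naive_sentiment("Oh, great, another error message"))  # positive
```

The scorer sees "great" and nothing else. A human moderator in the room hears the tone, sees the eye roll, and writes down "frustrated."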

What AI Is Actually Good For

None of this is to say AI has no place in your UX process. It absolutely does. Just not at the edges, where human understanding is most critical.

AI is genuinely useful in the middle of the research process:

- Synthesizing and summarizing large volumes of user research you've already collected (tools like AI Insights are built exactly for this)

- Drafting tasks or complete user test scripts as a starting point

- Analyzing patterns across transcripts you've reviewed (automated transcripts make this dramatically faster)

- Sharing key moments with your team quickly using automatically cut clips

- Automating the tedious parts of reporting, with automated test reports doing the heavy lifting so you can focus on the insights

Think of AI as a powerful research assistant, not a replacement for the researcher, and certainly not a replacement for the humans being researched.

The Only Thing an AI Can't Simulate Is a Human

At the heart of the AI sandwich problem is a seductive illusion: that intelligent-sounding output is the same as genuine human insight.

It isn't.

Your users are unpredictable. They're distracted. They make assumptions you never anticipated. They bring emotional baggage from previous products they've used. They misread things in ways that reveal profound truths about your design. They're sarcastic, impatient, delightful, and surprising.

AI can describe a hypothetical version of that. But only a real user is that.

Keep the Bread Real

The next time you're tempted to skip user recruitment by generating synthetic personas, or to save time by asking ChatGPT to evaluate your prototype, ask yourself what you're actually optimizing for.

If the answer is confidence in your design decisions, there's only one way to get it.

Put real people at the top and bottom of your process.

Let AI help with everything in between.