A typical usability test may return over 100 usability issues. How can you prioritize these issues so that the development team knows which are the most serious ones?

Below, I describe three ways to ensure you find and fix those issues with the most significant impact on your users’ experience.

How to log usability issues

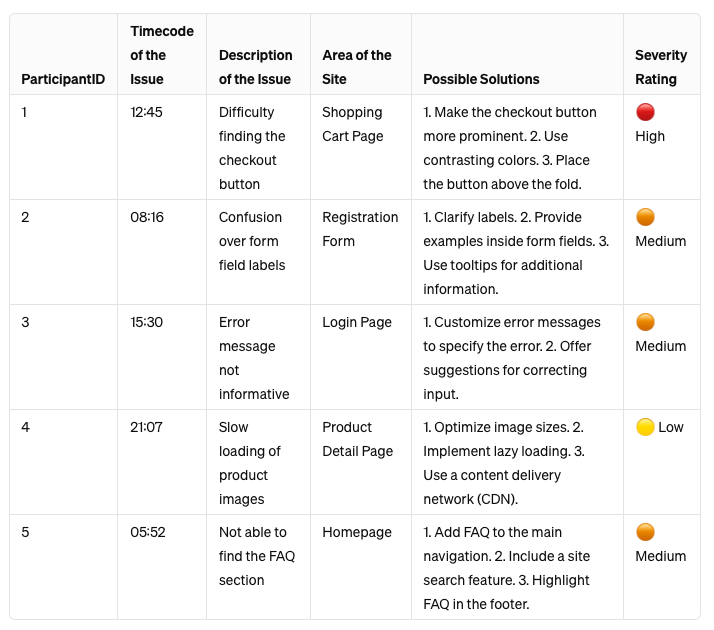

Before you can even start to think about prioritizing usability issues, there is something you need: a list of usability issues. (Surprise, surprise!)

While talking to our Userbrain clients, we learned that they capture many different things, but these are the most common ones:

- Participant ID (e.g., Tester 1)

- Timecode of the issue (if you have a video recording of your session)

- A brief description of the issue

- The area of the site where the issue occurs (e.g., “Product Detail Page”)

- Possible solutions

- The severity rating of the issue

I’d recommend writing down at least a short description of the issue spotted and the timecode of the video recording so that you can find the issue again later. Here’s an example:

If you’re using a user testing tool like Userbrain, you can log usability issues, including timecodes, directly in the tool, which streamlines the process significantly.

This feature allows you to jump straight to the exact moment of the issue in the video, tag its severity for easy organization, and filter the logged issues efficiently.

Moreover, there’s the added convenience of exporting all logged issues into an Excel sheet or CSV file, making it easier to analyze and share insights with your team.
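
If you keep your own log instead, the fields listed above map naturally onto a small record type. Here is a minimal Python sketch of that idea. The `UsabilityIssue` class, its field names, and the sample entries are all made up for illustration, and this is not Userbrain's export format:

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class UsabilityIssue:
    """One logged issue (hypothetical structure, not a Userbrain format)."""
    participant_id: str          # e.g. "Tester 1"
    timecode: str                # position in the session recording, "MM:SS"
    description: str             # brief description of the issue
    area: str                    # e.g. "Product Detail Page"
    possible_solution: str = ""  # optional, can be filled in later
    severity: str = ""           # optional, can be filled in later


def issues_to_csv(issues):
    """Serialize the issues to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=[f.name for f in fields(UsabilityIssue)],
        lineterminator="\n",
    )
    writer.writeheader()
    for issue in issues:
        writer.writerow(asdict(issue))
    return buffer.getvalue()


# Invented sample entries, for illustration only.
issues = [
    UsabilityIssue("Tester 1", "04:12", "Did not notice the coupon field", "Checkout"),
    UsabilityIssue("Tester 2", "07:45", "Searched for size guide, gave up", "Product Detail Page"),
]
csv_text = issues_to_csv(issues)
print(csv_text)
```

The resulting text can be saved as a `.csv` file and opened in Excel or any other spreadsheet tool.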

How to classify the severity of any usability problem

As David Travis points out, you can classify the severity of any usability problem into trivial, minor, major, or critical by asking just three questions:

Question 1: Does the problem occur on a red route?

Red routes in UX are critical tasks that users frequently perform to achieve their goals on a website, app, or system.

These are the paths users take to complete actions that are vital for both their success and the business’s success.

If a usability problem occurs on a red route, it is likely to impact user satisfaction and business outcomes significantly.

Some examples of red routes:

- E-commerce site: Completing a purchase from adding a product to the cart to successfully checking out.

- Banking app: Transferring money from one account to another or paying a bill directly from the account overview.

- Social media platform: Posting an update, including writing the post, adding any media, and successfully publishing it.

Question 2: Is the problem difficult for users to overcome?

This question assesses the level of difficulty users face when encountering the problem.

If a problem significantly hinders users from completing their tasks or requires a workaround that is not intuitive, it’s considered difficult to overcome.

Question 3: Is the problem persistent?

A persistent problem is one that consistently occurs across sessions, devices, or user segments.

This indicates that the problem is not an isolated incident but a systemic issue that affects a broad range of users or occurs under various conditions.

Now simply count the “yes” responses to the three questions:

- 0️⃣ Yeses: It’s a trivial problem, a visual hiccup. Annoying? Sure. Urgent? Not so much.

- 1️⃣ Yes: It’s a minor glitch. Noticeable but not a showstopper.

- 2️⃣ Yeses: It’s a major hurdle. Users are tripping up, and we need to pave the path.

- 3️⃣ Yeses: Red alert! It’s a critical problem, a full-blown usability crisis needing immediate attention.

Critical usability problems, therefore, occur on a red route, are difficult to overcome, and are persistent. These are the problems you should fix first.
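
The counting rule is simple enough to express in a few lines of code. The following Python sketch assumes you record the three answers as booleans. The function name `classify_severity` is my own, and the labels follow the trivial, minor, major, and critical scale mentioned above:

```python
# Severity label indexed by the number of "yes" answers (0 to 3).
SEVERITY_BY_YES_COUNT = ("trivial", "minor", "major", "critical")


def classify_severity(on_red_route: bool, hard_to_overcome: bool, persistent: bool) -> str:
    """Count the "yes" answers to the three questions and map them to a label."""
    yes_count = sum([on_red_route, hard_to_overcome, persistent])
    return SEVERITY_BY_YES_COUNT[yes_count]


# An issue on a red route that is persistent but easy to work around
# gets two "yes" answers.
print(classify_severity(on_red_route=True, hard_to_overcome=False, persistent=True))
```

Running this for every row of your issue log gives you a severity column you can sort or filter by.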

The task completion spreadsheet

Another method of prioritizing usability issues is the task completion spreadsheet.

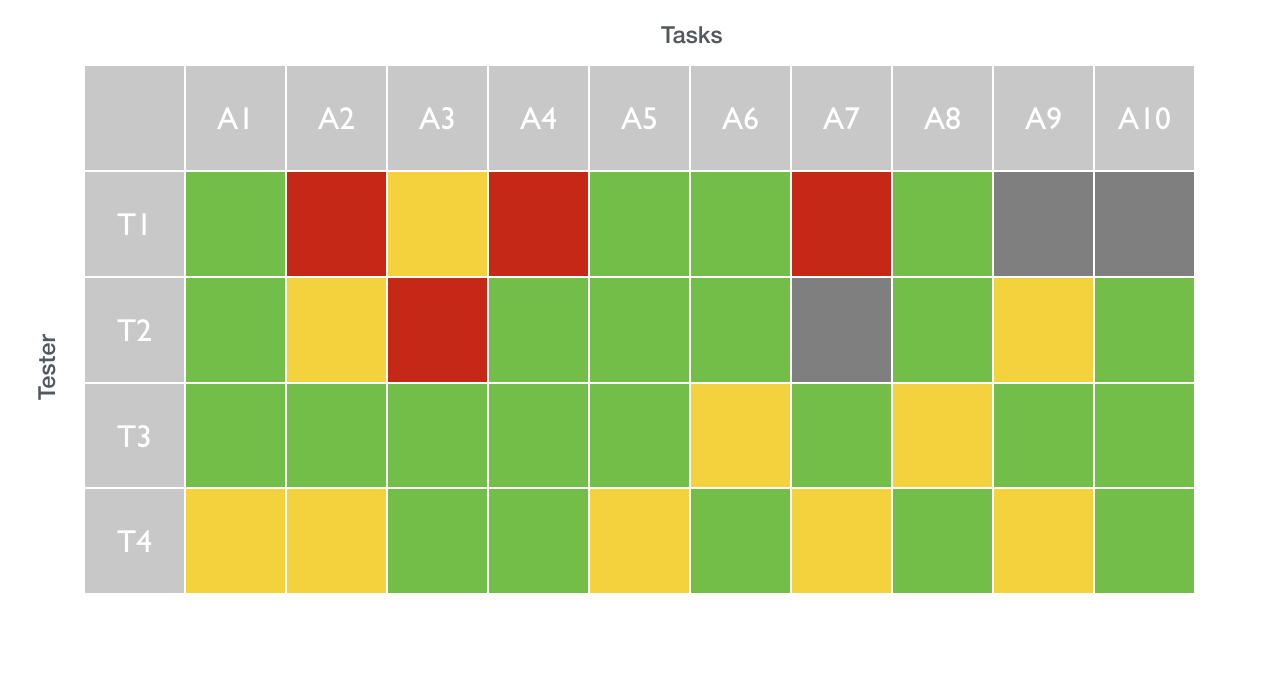

I often use this method when presenting usability study results to our clients. It requires that you capture task completion in your user tests. On the vertical axis, you lay out all the tasks; on the horizontal axis, the individual participants.

In the example below, we’ve tested ten tasks:

You then show the outcome of every task for every tester by using one of four colors:

- Green: The tester had no problems performing the task assigned.

- Yellow: Minor issues that the tester could resolve without any external help.

- Red: The tester couldn’t accomplish the task.

- Gray: The tester couldn’t start the task (e.g., the internet connection wasn’t working).

This is a great way to spot tasks that were problematic for most participants (the rows with the most red cells).

You should then try to discover why these tasks were problematic and focus on resolving these issues first.
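
If your results live in a file rather than a colored spreadsheet, you can rank the tasks by how often they failed. Below is a rough Python sketch with invented task names and outcomes. Leaving gray cells out of the denominator is my own assumption, since those testers never attempted the task:

```python
from collections import Counter

# results[task] = one outcome per participant: "green", "yellow", "red", or "gray".
# Task names and outcomes are invented for illustration.
results = {
    "Find a product": ["green", "green", "yellow", "green", "gray"],
    "Apply a coupon": ["red", "yellow", "red", "red", "gray"],
    "Check out": ["green", "red", "yellow", "green", "green"],
}


def failure_rate(outcomes):
    """Share of attempted runs that ended red (gray runs are not counted)."""
    counts = Counter(outcomes)
    attempted = len(outcomes) - counts["gray"]
    return counts["red"] / attempted if attempted else 0.0


# Most problematic task first.
ranked = sorted(results, key=lambda task: failure_rate(results[task]), reverse=True)
for task in ranked:
    print(f"{task}: {failure_rate(results[task]):.0%} of attempts failed")
```

The ranking only tells you where to look. You still need to watch the recordings to understand why a task failed.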

The rainbow spreadsheet

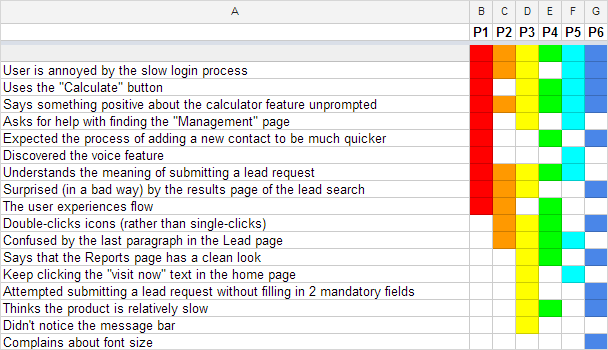

If you haven’t read It’s Our Research by Tomer Sharon, I really encourage you to do so. In his book, Tomer presents another great way of capturing and prioritizing usability issues: the rainbow spreadsheet.

How prioritizing usability issues with the rainbow spreadsheet works:

- This visualization is quite similar to the task completion spreadsheet – you just display issues instead of tasks on the vertical axis.

- Repeated observations are highlighted in different colors.

- The more “colorful” an issue, the more critical it is to fix it.

You can download his spreadsheet here.
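
To illustrate the principle without the spreadsheet, here is a small Python sketch that prints one row per issue and one column per participant, sorted by how many participants ran into the issue. It is a plain-text approximation with invented data, not a reproduction of Tomer Sharon's actual spreadsheet layout:

```python
# observations[issue] = participants for whom the issue was observed.
# Issues and participant IDs are invented for illustration.
participants = ["P1", "P2", "P3", "P4", "P5"]
observations = {
    "Coupon field overlooked": {"P1", "P2", "P4", "P5"},
    "Size guide hard to find": {"P2"},
    "Unclear shipping costs": {"P1", "P3", "P5"},
}


def rainbow_rows(observations, participants):
    """Build (issue, marks, count) rows, most frequently observed issue first."""
    rows = []
    for issue, seen_by in observations.items():
        marks = ["x" if p in seen_by else "." for p in participants]
        rows.append((issue, marks, marks.count("x")))
    return sorted(rows, key=lambda row: row[2], reverse=True)


rows = rainbow_rows(observations, participants)
for issue, marks, count in rows:
    print(f"{issue:<26} {' '.join(marks)}  ({count}/{len(participants)})")
```

Each "x" stands in for a colored cell, so the rows with the most marks are the most "colorful" ones.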

Are there any tools or software recommended for tracking and managing usability issues over time?

For tracking and managing usability issues, tools like Jira, Trello, and Asana are popular among teams. These tools help you organize and prioritize issues by severity, impact, and other criteria. User testing platforms like Userbrain offer insights directly from users and provide a way to log and manage issues together with relevant video snippets.

How do you balance prioritizing usability issues with other product development tasks, such as adding new features or fixing technical bugs?

Balancing the prioritization of usability issues with other development tasks requires a strategic approach. Teams often use frameworks like MoSCoW (Must have, Should have, Could have, Won’t have this time) or the RICE scoring model (Reach, Impact, Confidence, Effort) to evaluate and prioritize tasks based on their importance, impact, and resource requirements.
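
For reference, a RICE score is calculated as Reach × Impact × Confidence ÷ Effort. The Python sketch below shows how a usability fix and a new feature could be compared on the same scale. The backlog items and all numbers are invented, and the units (users per quarter, person-months) are just one common convention:

```python
def rice_score(reach, impact, confidence, effort):
    """RICE score: (reach * impact * confidence) / effort."""
    if effort <= 0:
        raise ValueError("effort must be greater than zero")
    return reach * impact * confidence / effort


# Invented backlog items: reach in users per quarter, impact on a relative
# scale, confidence between 0 and 1, effort in person-months.
backlog = {
    "Fix coupon field visibility": rice_score(reach=800, impact=2, confidence=0.8, effort=1),
    "Add wishlist feature": rice_score(reach=300, impact=1, confidence=0.5, effort=4),
}

# Highest score first.
for item, score in sorted(backlog.items(), key=lambda pair: pair[1], reverse=True):
    print(f"{item}: {score:.1f}")
```

Scoring usability fixes and new features with the same formula makes the trade-off explicit instead of leaving it to gut feeling.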

Summing up

Finding the low-hanging fruit in your list of usability issues isn’t hard. But while some problems are obvious during testing, others may only become visible after you have closely examined your data.

The methods above help you show when a problem really is a problem, which is especially useful if you are responsible for communicating these insights to the rest of your team.

How do you decide which usability issues to tackle first? I’ll be happy to hear about your workflows.